By: Cminds

Whether you are a business owner, marketer, or developer, Web Vitals can help you quantify the experience of your site and identify opportunities to improve.

In May 2020, Google announced that Core Web Vitals would be combined with its existing signals for page experience. In November 2020, Google revealed that this change would take effect in May 2021.

What are Core Web Vitals?

By: Medium

Core Web Vitals are specific factors that Google considers important in a webpage’s overall user experience. They measure a website’s loading, interactivity, and visual stability. All three matter for how fast a page feels to the user.

By: Seo Hacker

The Web Vitals initiative aims to simplify the landscape and help sites focus on the metrics that matter most: the Core Web Vitals. Google has said Page Experience will be an important update, so the auditing process should be detailed and accurate.

Here are the important metrics that need to be analyzed in a Core Web Vitals audit:

- Largest Contentful Paint (LCP)

- First Input Delay (FID)

- Cumulative Layout Shift (CLS)

Largest Contentful Paint (LCP)

LCP measures the largest image or block of content within the user's viewport. Anything that extends beyond the screen does not count.

By: RockContent

Largest Contentful Paint (LCP) measures how quickly content becomes visible to the user: it marks the point when the largest content element in the viewport is rendered.

LCP should occur within 2.5 seconds of when the page first starts loading. Anything between 2.5 and 4 seconds “needs improvement,” and anything beyond 4 seconds is scored as “poor.”

LCP is an easy metric to understand because all you have to do is look at your web page and determine what the largest text block or image is and then optimize it by making it smaller or removing anything that would prevent it from downloading quickly.

First Input Delay (FID)

We all know how important it is to make a good first impression. It's important when meeting new people, and it's also important when building experiences on the web.

By: Seo Hacker

First Input Delay (FID) measures the time from when a user first interacts with your site (i.e. when they click a link, tap on a button, or use a custom, JavaScript-powered control) to the time when the browser is actually able to respond to that interaction. FID should be less than 100 milliseconds. Anything between 100 and 300 milliseconds “needs improvement,” and anything beyond 300 milliseconds is scored as “poor.”

The First Input Delay (FID) metric helps measure your user's first impression of your site's interactivity and responsiveness.

Cumulative Layout Shift (CLS)

A layout shift occurs any time a visible element changes its position from one rendered frame to the next.

By: SEO Hacker

Cumulative Layout Shift (CLS) measures the sum total of all individual layout shift scores for every unexpected layout shift that occurs during the entire lifespan of the page. CLS should be less than 0.1. Anything between 0.1 and 0.25 “needs improvement,” and anything above 0.25 is scored as “poor.”
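To make the "sum of all unexpected shifts" idea concrete, here is a minimal Python sketch. The `LayoutShift` record and its `had_recent_input` flag are illustrative names of my own, loosely modelled on what browsers report, and the scores are made-up numbers. It follows the whole-page sum described in this article and treats shifts that directly follow user input as expected.

```python
from dataclasses import dataclass


@dataclass
class LayoutShift:
    score: float            # individual layout shift score
    had_recent_input: bool  # True if the shift followed a user interaction


def cumulative_layout_shift(shifts):
    """Sum the scores of all unexpected layout shifts."""
    return sum(s.score for s in shifts if not s.had_recent_input)


# Made-up example data: three shifts, one of them caused by the user.
shifts = [
    LayoutShift(0.05, False),
    LayoutShift(0.20, True),   # expected shift, so it is ignored
    LayoutShift(0.08, False),
]
cls = cumulative_layout_shift(shifts)
print(round(cls, 2))
```

In this example only the two unexpected shifts count toward the total, so the page lands in the "needs improvement" band.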

The Cumulative Layout Shift (CLS) metric helps you address unexpected layout shifts by measuring how often they occur for real users.

How to Use Screaming Frog to Audit Core Web Vitals
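The thresholds quoted for the three metrics can be collected into one small lookup. This is only a sketch: the metric keys (`lcp_ms`, `fid_ms`, `cls`) are names I picked for illustration, and treating a value that sits exactly on a limit as belonging to the better band is my assumption.

```python
# (good_limit, poor_limit) per metric, as quoted in this article.
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "fid_ms": (100, 300),
    "cls": (0.1, 0.25),
}


def rate(metric, value):
    """Return 'good', 'needs improvement', or 'poor' for a metric value."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


print(rate("lcp_ms", 3200))
print(rate("cls", 0.3))
```

A helper like this is handy later in the audit when you want to label every crawled URL in one pass.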

As covered above, Core Web Vitals are a set of real-world metrics that Google will use (from May 2021) to measure key aspects of user experience when loading a webpage.

To start an audit you'll need:

- The paid version of the Screaming Frog website crawler.

- A PageSpeed Insights API key.

- The domain of the website you are auditing.

This audit process I have put together is designed to provide real details, examples, and data to work with when tackling Core Web Vitals issues.

Step 1

First, you’ll need to connect your PageSpeed Insights API key to Screaming Frog. This gives you access to PageSpeed Insights data and recommendations on a page-by-page basis.

1. With your PageSpeed Insights API key in hand, open up Screaming Frog and navigate to Configuration > API Access > PageSpeed Insights.

2. Paste your API key into the “Secret Key” box.

3. Click “Connect.”

You only get a limited number of PageSpeed Insights queries (around 25,000 per day). That should be enough for smaller sites. For larger sites, you can apply what you learn from the pages you do get data for to the rest of the site.
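Screaming Frog makes the PageSpeed Insights calls for you, but it can help to see roughly what a single request looks like. The sketch below builds a request URL for the public v5 `runPagespeed` endpoint and trims a URL list to the daily allowance mentioned above. The helper names, the `"KEY"` placeholder, and the choice of the `mobile` strategy are assumptions for illustration only.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url, api_key, strategy="mobile"):
    """Build the request URL for one PageSpeed Insights query."""
    query = urlencode({"url": page_url, "key": api_key, "strategy": strategy})
    return f"{PSI_ENDPOINT}?{query}"


def within_daily_quota(urls, daily_limit=25_000):
    """Keep only as many URLs as one day's quota allows."""
    return urls[:daily_limit]


# "KEY" is a placeholder, not a real API key.
print(build_psi_url("https://example.com/", "KEY"))
```

On a large site, a cap like `within_daily_quota` is one simple way to decide which pages get queried today and which wait for the next crawl.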

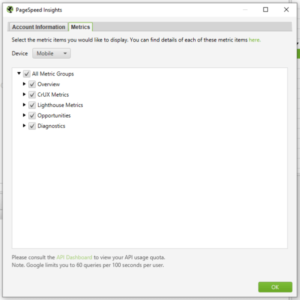

4. Click on “Metrics.”

5. Select “All Metric Groups,” or choose just the groups you want to report on.

6. Click “OK.”

The following metric groups are available:

- Overview – Provides general overview information for the page, such as the page size and the potential load savings that could be made on the page.

- CrUX Metrics – Data from the Chrome User Experience Report. If field data is available from real-life, opted-in users, it will appear here.

- Lighthouse Metrics – Most of the lab data we use within the audit comes from here, including LCP, TBT, and CLS scores.

- Opportunities – Provides suggestions for page speed improvements specific to each page.

- Diagnostics – Provides additional information about the overall performance of the website being crawled.
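The split between CrUX (field) data and Lighthouse (lab) data is also visible in the raw PageSpeed Insights response. The sketch below pulls both out of an already-parsed JSON response. The key names reflect my understanding of the v5 response format and should be checked against the API documentation. The sample response is made up, and field data may simply be missing for low-traffic pages.

```python
def extract_vitals(psi_response):
    """Pull lab (Lighthouse) and field (CrUX) values out of a PSI response dict."""
    audits = psi_response.get("lighthouseResult", {}).get("audits", {})
    field = psi_response.get("loadingExperience", {}).get("metrics", {})

    def lab(audit_id):
        return audits.get(audit_id, {}).get("numericValue")

    def crux(metric_id):
        return field.get(metric_id, {}).get("percentile")

    return {
        "lab_lcp_ms": lab("largest-contentful-paint"),
        "lab_tbt_ms": lab("total-blocking-time"),
        "lab_cls": lab("cumulative-layout-shift"),
        "field_lcp_ms": crux("LARGEST_CONTENTFUL_PAINT_MS"),
        "field_fid_ms": crux("FIRST_INPUT_DELAY_MS"),
        # Raw percentile as returned; any scaling is not normalized here.
        "field_cls_raw": crux("CUMULATIVE_LAYOUT_SHIFT_SCORE"),
    }


# Made-up response with lab data only (no field data available).
sample_response = {
    "lighthouseResult": {
        "audits": {
            "largest-contentful-paint": {"numericValue": 3100.0},
            "total-blocking-time": {"numericValue": 240.0},
            "cumulative-layout-shift": {"numericValue": 0.12},
        }
    }
}
vitals = extract_vitals(sample_response)
print(vitals)
```

Missing values come back as `None`, which makes it easy to see which pages have no real-user data behind them.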

Step 2

Next, you’ll need to crawl the website.

7. Copy the domain of the website you are crawling and paste it into the box at the top of the crawler that says “Enter URL to spider.”

As the site is crawled, you’ll notice that there is both a “Crawl” and an “API” progress bar in the top right-hand corner.

8. You’ll need to wait for both of these to reach 100% before you start analyzing your data.

Step 3

The next step is to report the size of the problem and communicate its extent. To do this, you need to look at what percentage of pages fail each Core Web Vitals minimum threshold.

9. In the top navigation bar, select “PageSpeed” and then “Export.”

10. Click on the “PageSpeed” tab, as this will show all discovered URLs that have speed data reported from the API.

Looking at the exported data, find the following columns and filter accordingly:

- Largest Contentful Paint Time (ms) – Filter to find all pages with LCP of 4000ms or more.

- Total Blocking Time (ms) – Filter to find all pages with TBT of 300ms or more. TBT is the lab metric used here in place of FID, which can only be measured from real user input.

- Cumulative Layout Shift – Filter to find all pages with CLS of 0.25 or more.

11. Add this data to a separate datasheet so that you or your client can easily view the pages that fail each Core Web Vital.

You can then report on the percentage of pages on the site that fail each Core Web Vitals minimum threshold.
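If you prefer a script to spreadsheet filters, the same calculation can be sketched in Python. The three metric column names are the ones listed above, while the `Address` column and the sample rows are assumptions for illustration. Check the header row of your own export before relying on this.

```python
import csv
import io

# Column name -> value at or above which a page fails (from the filters above).
FAIL_LIMITS = {
    "Largest Contentful Paint Time (ms)": 4000,
    "Total Blocking Time (ms)": 300,
    "Cumulative Layout Shift": 0.25,
}


def failure_rates(csv_file):
    """Return the percentage of pages failing each threshold."""
    rows = list(csv.DictReader(csv_file))
    rates = {}
    for column, limit in FAIL_LIMITS.items():
        # Skip rows where the API returned no value for this metric.
        values = [float(r[column]) for r in rows if r.get(column)]
        failing = sum(1 for v in values if v >= limit)
        rates[column] = 100 * failing / len(values) if values else 0.0
    return rates


# Made-up stand-in for the exported file.
sample = io.StringIO(
    "Address,Largest Contentful Paint Time (ms),"
    "Total Blocking Time (ms),Cumulative Layout Shift\n"
    "https://example.com/,2100,120,0.02\n"
    "https://example.com/a,4500,350,0.10\n"
    "https://example.com/b,3900,90,0.31\n"
    "https://example.com/c,5200,610,0.27\n"
)
rates = failure_rates(sample)
for column, pct in rates.items():
    print(f"{column}: {pct:.0f}% of pages fail")
```

For a real export you would pass an opened file, for example `open("export.csv", newline="")`, instead of the in-memory sample.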

Step 4

Next, report the issues specific to each page and make appropriate recommendations.

12. On the right-hand side, in the “Overview” tab, scroll down to “PageSpeed.”

Here you will find the list of issues and recommendations relating to page speed and, for the most part, Core Web Vitals. If you are unfamiliar with any of them, search for it on the web.dev website to get more information.

13. Click on an issue to see the pages affected, and export them to save into your datasheet.

At this point, you are reporting on the specifics of how many pages are impacted by a particular issue, along with the URLs of the affected pages.

Using the exported data for each issue, you can add up the total potential savings and work out the average savings per page that resolving the issue would bring. You can then recommend which issues to tackle first, based on the amount of potential load savings.
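The totals and averages can be sketched like this. The issue names are Lighthouse opportunity titles, but the per-page savings are made-up numbers and the input shape (issue name mapped to a list of savings in milliseconds) is an assumption about how you have organized your datasheet.

```python
from statistics import mean


def rank_issues(savings_by_issue):
    """Summarize savings per issue and sort by total savings, largest first."""
    summary = [
        {
            "issue": issue,
            "pages": len(savings),
            "total_ms": sum(savings),
            "average_ms": mean(savings),
        }
        for issue, savings in savings_by_issue.items()
        if savings  # skip issues with no affected pages
    ]
    return sorted(summary, key=lambda row: row["total_ms"], reverse=True)


# Made-up savings per affected page, in milliseconds.
ranked = rank_issues({
    "Properly Size Images": [300, 150],
    "Eliminate Render Blocking Resources": [900, 1200, 600],
})
for row in ranked:
    print(row["issue"], row["pages"], row["total_ms"], row["average_ms"])
```

Sorting by total savings is only one possible priority order. You might sort by average savings per page instead if a fix has to be applied page by page.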

Step 5

By reporting on examples of the issues specific to each page, we provide a more granular dataset. This allows the client or developers to quickly understand what the issue is and whether it is something that can be resolved.

14. Select one of the URLs affected by the issue, then select the “PageSpeed Details” tab in the bottom navigation bar.

The bottom left panel shows page speed information relevant to the selected page. For example, navigate to Opportunities > Eliminate Render Blocking Resources.

After you have pulled this data together for every issue on the site, you can provide a written report with recommendations for each issue.

Step 6

The question I am most often asked is how this update is going to impact rankings.

Once changes have been made, crawl the site again and compare the results. Excellent content that meets or exceeds the Core Web Vitals minimum thresholds is likely to see a slight improvement in rankings, which will, of course, mean slight drops in rankings for the competitors it overtakes.
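The before-and-after comparison can be as simple as the sketch below. It assumes you have reduced each crawl to a mapping of URL to one metric value where lower is better (LCP in milliseconds here). The URLs and numbers are made up.

```python
def compare_crawls(before, after):
    """Compare one metric per URL across two crawls (lower is better)."""
    changes = {}
    # Only URLs present in both crawls can be compared.
    for url in sorted(before.keys() & after.keys()):
        delta = after[url] - before[url]
        if delta < 0:
            status = "improved"
        elif delta > 0:
            status = "regressed"
        else:
            status = "unchanged"
        changes[url] = (delta, status)
    return changes


# Made-up LCP values in milliseconds.
before = {"https://example.com/": 4500, "https://example.com/a": 2300}
after = {"https://example.com/": 2400, "https://example.com/a": 2600}
changes = compare_crawls(before, after)
for url, (delta, status) in changes.items():
    print(url, delta, status)
```

Pages that appear in only one crawl are skipped, so new or removed URLs need a separate check.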

While we can’t predict exactly what will happen on the day the Page Experience Update goes live, this audit process will help you to get well prepared. Site owners that are able to meet the minimum thresholds are putting themselves at a distinct advantage in terms of search visibility.

Core Web Vitals

By: Onward

We believe Core Web Vitals help make the web better for everyone as experiences on the web improve. We hope that sharing our knowledge of the page experience update and launching supporting tools ahead of time will help the diverse ecosystem of web creators, developers, and businesses to improve and deliver more enjoyable user experiences.